The mystery of mini-recessions: The dog that didn’t bark

[If you haven’t already, read the previous post first.]

In this post I plan to define mini-recessions and then discuss why they are so mysterious and why they might offer the key to macroeconomics. Then I’ll offer an explanation for the mystery.

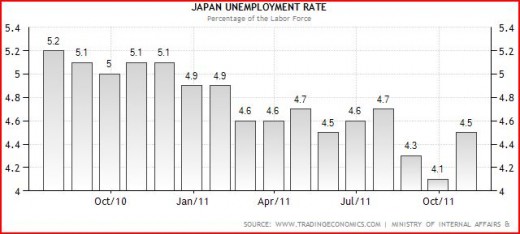

To understand mini-recessions we first need to understand the monthly unemployment data collected by the Bureau of Labor Statistics. This data is based on large surveys of households. It seems relatively “smooth,” rising and falling with the business cycle. Month-to-month changes, however, often show movements that seem “too large” by 0.1% to 0.3%, relative to the other underlying macro data available (including the more accurate payroll survey). So let’s assume that once in a while the reported unemployment rate is about 0.3% below the actual rate. And once in a great while this is followed soon after by an unemployment rate that is about 0.3% above the actual rate. Then if the actual rate didn’t change during that period, the reported rate would rise by about 0.6%.

I searched the postwar data, which starts in 1948 and covers 11 recessions. During expansions I found only 12 occasions where the unemployment rate rose by more than 0.6%. In 11 cases the terminal date was during a recession. In other words, if you see the unemployment rate rise by more than 0.6%, you can be pretty sure we are entering a recession. The exception was during 1959, when unemployment rose by 0.8% during the nationwide steel strike, and then fell right back down a few months later. That’s not called a recession (and shouldn’t be, in my view). Oddly, unemployment had risen by exactly 0.6% above the Bush expansion low point by December 2007 (when the current recession began) and by 0.7% by March 2008, and yet many economists didn’t predict a recession until mid-2008, or even later.
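For anyone who wants to repeat this kind of search, here is a minimal sketch of the test I have in mind: track the lowest unemployment rate seen so far and measure how far the rate later rises above it. The function name and both monthly series are made up purely for illustration; they are not BLS data, and this is not necessarily the exact procedure behind the counts above.

```python
NOISE_LIMIT = 0.6  # threshold from the text, in percentage points


def max_rise_above_low(rates):
    """Return the largest rise of the series above its lowest earlier value."""
    running_low = rates[0]
    max_rise = 0.0
    for rate in rates[1:]:
        running_low = min(running_low, rate)
        max_rise = max(max_rise, rate - running_low)
    # Round to one decimal place, the precision of the published rate.
    return round(max_rise, 1)


# Hypothetical monthly unemployment rates (percent), invented for illustration.
noisy_expansion = [4.5, 4.4, 4.7, 4.6, 4.9, 4.8, 4.6]
entering_recession = [4.5, 4.4, 4.7, 5.0, 5.4, 6.1, 6.8]

for name, series in [("noisy expansion", noisy_expansion),
                     ("entering recession", entering_recession)]:
    rise = max_rise_above_low(series)
    print(f"{name}: rise of {rise}, above noise limit: {rise > NOISE_LIMIT}")
```

In the first made-up series the rate never gets more than 0.5 above its low, so it would count as noise. In the second it climbs 2.4 above the low, which is the kind of move that has almost always meant recession.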

What’s my point? That fluctuations in U of up to 0.6% are generally noise, and don’t necessarily indicate any significant movement in the business cycle. But anything more almost certainly represents a recession.

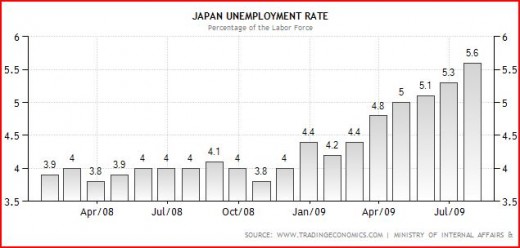

Now here’s one of the most striking facts about US business cycles. When the unemployment rate does rise by more than 0.6%, it keeps going up and up and up. With the exception of the 1959 steel strike, there are no mini-recessions in the US. The smallest recession occurred in 1980, when the unemployment rate rose 2.2% above the Carter expansion lows. That’s a huge gap: almost nothing between 0.6% and 2.2%.

It’s often said that nature abhors a vacuum. I’d add that nature abhors a huge donut hole in the distribution of “shocks.” Suppose there were lots of earthquakes of magnitude zero to six. And occasional earthquakes of more than magnitude seven. And even fewer earthquakes of more than magnitude eight. But nothing between six and seven. Wouldn’t that be very odd? I can’t imagine any geological theory capable of explaining such a gap. We normally expect shocks to become less and less common as we move to larger scales. And to some extent that’s true of recessions. The Great Depression is unique in US history. Big recessions like 1893, 1982, and 2009 (roughly 10% unemployment) are rarer than common recessions. So why no mini-recessions? (Or almost none, if we are going to count the 1959 downturn as a mini-recession.)

I don’t see why other macroeconomists are not obsessed with this issue. Why isn’t there a Journal of Mini-Recessions? I suppose some smart-alec commenter will say, “because there’s nothing to study, stupid.” But he’d be missing the point: just like the dog that didn’t bark in that Sherlock Holmes tale, the lack of mini-recessions may provide the explanation for the business cycle.

(Or is there such a field, and I just don’t know about it?)

Let’s start with real theories of the cycle. I can’t imagine any plausible real theory that didn’t predict lots more mini-recessions than actual recessions. After all, aren’t modest size real shocks (i.e. those capable of raising the unemployment rate by 1.0% to 2.0%) much more common than really big real shocks, capable of raising unemployment by more than 2.0%?

Of course one could say the same thing about nominal shocks. Wouldn’t you expect modest-sized nominal shocks to be more common than big nominal shocks? Yes, but I still think the lack of mini-recessions points to nominal shocks (or monetary policy) as being the culprit.

Let’s try to construct a “just so story” to explain why recessions are always fairly big, and then look for evidence to support the story. Suppose you have the following conditions:

1. There is a data lag of a couple months.

2. There is a recognition lag of a few months. This is the time between when the data comes in and the Fed recognizes that a new trend is developing.

3. When the Fed does recognize problems, it reacts in a “responsible and deliberative fashion”; it doesn’t change policy drastically, in a move that appears panicky.

4. The Fed targets nominal interest rates.

5. When the Wicksellian equilibrium rate is above the market rate the economy expands at trend or above.

6. The Fed can’t directly observe the Wicksellian equilibrium rate, and tends to gradually nudge rates higher as the economy approaches full employment and seems in danger of overheating, or as inflation rises above target and appears in danger of affecting inflation expectations.

7. At some point the target rate is nudged above the Wicksellian equilibrium rate, but the Fed doesn’t know this initially.

8. When the economy turns into recession the Wicksellian equilibrium rate falls fairly rapidly. Even after the Fed begins cutting rates the market rate will be above the equilibrium rate for several months. Hence monetary policy stays “contractionary” for several months after the Fed realizes a recession may be developing.

Put all that together and you get contractions that could easily last for 9 months to a year, even if Fed policy is attempting to be countercyclical. BTW, you could tell a similar story with money supply targeting, as velocity tends to fall of its own accord during contractions.
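To make the mechanics of the eight conditions concrete, here is a toy sketch, not an estimated model. It counts the months in which the policy rate sits above the Wicksellian equilibrium rate when the Fed starts cutting only after a lag and then cuts at a fixed pace. Every number in it is an assumption I invented for illustration (starting rates, how fast the equilibrium rate falls, its floor, the lag, the size of the cuts), and the equilibrium path is simply imposed rather than responding to policy, so the output shows only the mechanism, not evidence for it.

```python
def contractionary_months(lag_months, cut_per_month_bp,
                          start_policy_bp=525, start_equilibrium_bp=500,
                          equilibrium_fall_bp=50, equilibrium_floor_bp=200,
                          horizon=36):
    """Count months in which the policy rate is above the equilibrium rate.

    All rates are in basis points. All defaults are hypothetical.
    """
    count = 0
    for month in range(horizon):
        # Assumed path: the equilibrium rate falls steadily, then hits a floor.
        equilibrium = max(equilibrium_floor_bp,
                          start_equilibrium_bp - equilibrium_fall_bp * month)
        # The Fed makes no cuts until the data and recognition lags have passed.
        cuts_made = max(0, month - lag_months + 1)
        policy = start_policy_bp - cut_per_month_bp * cuts_made
        if policy > equilibrium:
            count += 1
    return count


# Five-month combined lag (two for data, three for recognition), gradual cuts.
gradual = contractionary_months(lag_months=5, cut_per_month_bp=50)
# Same lag, but a much more aggressive pace of cuts.
fast = contractionary_months(lag_months=5, cut_per_month_bp=150)

print(gradual)  # 11
print(fast)     # 7
```

With these invented numbers, policy stays contractionary for 11 months under gradual cuts and for 7 months under aggressive cuts. Different assumptions would give different counts. What carries over is that a lag plus a deliberate pace of cuts puts a floor under how short the contraction can be.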

OK, but is there any evidence for my just so story? Maybe a bit. Let’s start with the peculiar 1980 recession, the mildest in the postwar period. I recall in mid-1980 thinking that Carter was toast, and that the recession would be as bad as 1974-75. The Fed had raised rates to about 15% in late 1979. Unemployment soared in the spring of 1980. But then it suddenly stopped rising, leaving the recession the mildest on record. What explains this turnabout? There are two possible answers. First, this was one of the few periods where the Fed wasn’t targeting interest rates. But I don’t think that tells the whole story. Rather it was the Fed’s willingness to do an extraordinary about-face in policy, and ignore interest rates.

We know from the Fed minutes that they generally don’t like to suddenly reverse course; it makes them look bad. It makes the previous decision look foolish. So during recessions they cut rates gradually, in a “responsible and deliberative fashion.” But not in 1980. The 3-month T-bill yield plunged from 15.2% in March to 7.07% in June. That’s more than 800 basis points in 3 months! And that immediately ended the recession. The unemployment rate had ended 1979 at 6.0%, and then soared to 7.8% in July 1980. But that was it; the rate immediately started falling, as the Fed stimulus (which pushed nominal interest rates well below the inflation rate) caused NGDP to soar at an annual rate of 19% in late 1980 and early 1981.

So that explains why the 1980 recession was so short. But what of the longer than average recessions like 1982 and 2009? In mid-1981 Volcker realized that the previous “tight money” policy had failed and more draconian medicine was needed. So the Fed tightened policy and kept it tight even after it was clear we were in recession. Volcker kept it tight until inflation fell to about 4%. So the 1982 recession was longer and deeper than normal because it was a rare case where the Fed wanted a longer and deeper recession.

In 2009 the Fed would have preferred a milder recession, but they weren’t able to use their preferred interest rate instrument to spur the economy. So the “liquidity trap” can explain this one. But most contractions are about 9 to 12 months, which I think fits my just so story pretty well. In some cases like 1974, the first part of the recession is arguably not a recession at all, as unemployment rose only a tiny amount. Rather it was a sluggish economy produced by the oil shocks and price controls. The severe phase of the recession (when unemployment soared) was in late 1974 and early 1975, and was pretty short.

To summarize, I can’t even imagine a non-monetary theory that explains the lack of mini-recessions. RBC, RIP. Bye bye to blaming ObamaCare. So much for the sub-prime bubble. But I can imagine a plausible theory of how inertial central banks that target nominal rates and observe the macroeconomy with a lag might occasionally produce short contractions, typically 9 to 12 months. I recall that in both the 1991 and 2001 recessions it wasn’t until about 6 months in that the consensus of economists even forecast a recession. A few months later the contraction was over. And I believe this theory can also account for the occasional recession that is slightly shorter or longer.

Also note that it’s a post-war US theory only. I’d expect mini-recessions in small, less diversified economies, and perhaps even in the US prior to WWII, when we had a different monetary regime. Unfortunately we lack comprehensive monthly unemployment data from before WWII.

PS. Grad students who are interested might want to compare this theory to the Romer and Romer narrative of Fed decisions. There might be some overlap.

PPS. The two occasions where the U-rate rose by 0.6% with no recession were 1957 and 1960. In both cases we were officially in recession within 2 months. So maybe 0.5% is the limit of randomness.

PPPS. I discussed one short recession (6 months) and three long ones (16, 18, 18 months) in this post. The other 8 post-WWII recessions were all between 8 and 11 months long. There’s probably a reason for that, and I’d guess it has something to do with monetary policy.